Best Inference Platform for Open Models

High QualityReliableSecure

Trusted By

Advanced. Secure. Fast Open Models Now Available

Instantly access advanced open-source models optimized for quality, speed and security through API.

Full-stack AI

Deliver full-stack AI services from infrastructure to build, tune, and scale AI models

High-quality Inference

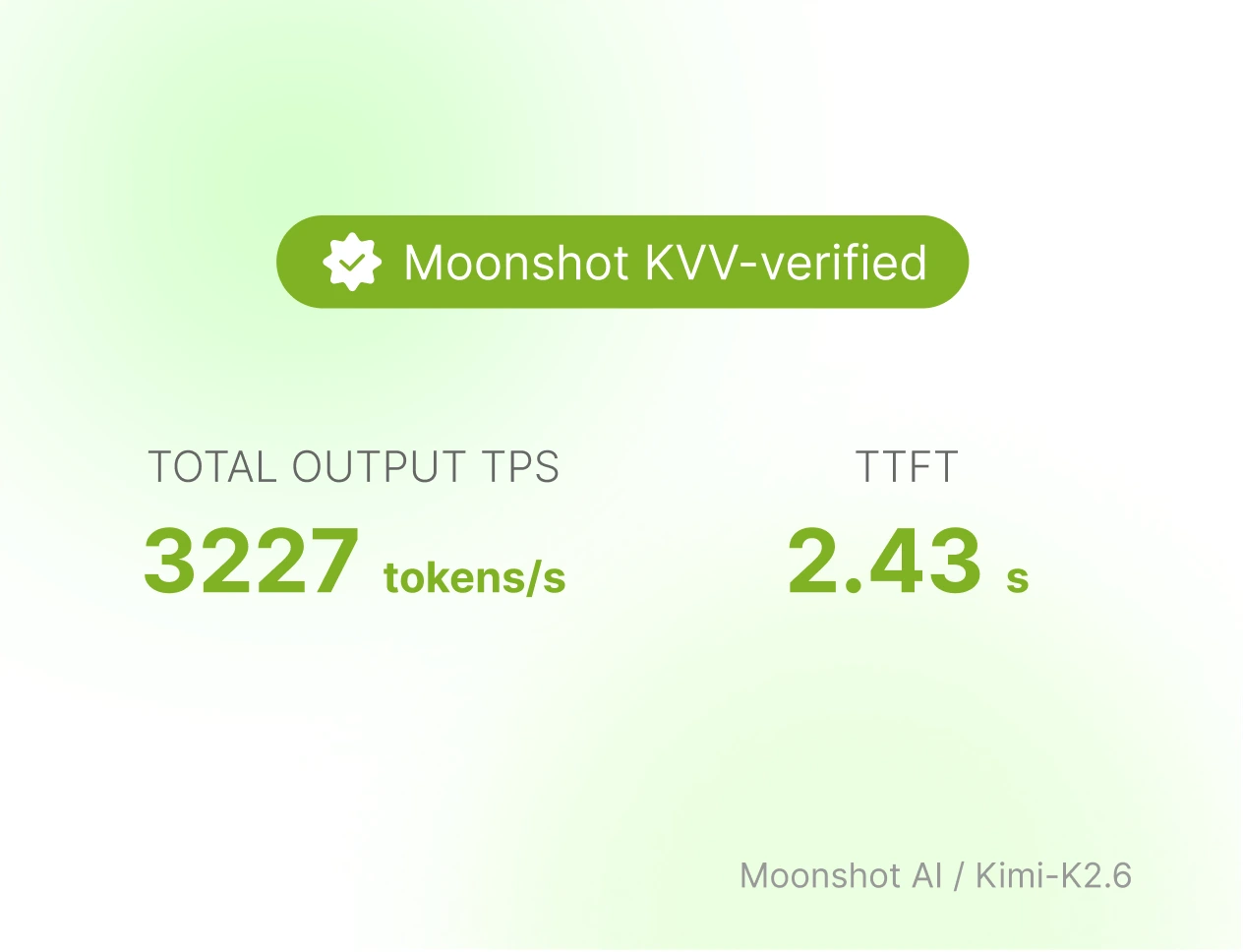

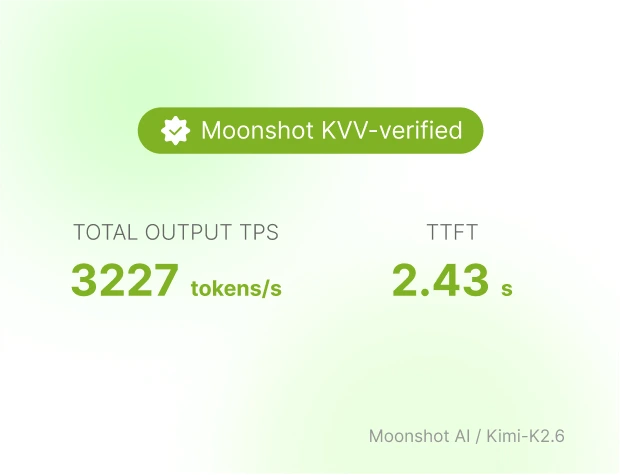

Kimi K2.6 Clusters have passed official KVV verification by Moonshot. It delivers stable low latency and high-performance inference even under high concurrency.

Reliable & Stable

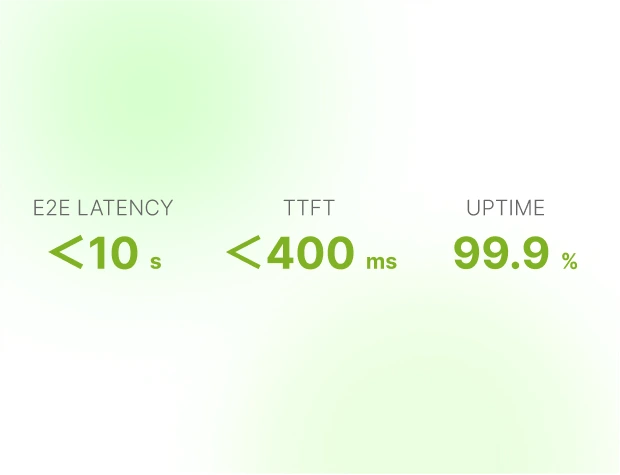

99.9% uptime, low latency — backed by advanced AI infra, in-house monitoring, 24/7 support.

Security and Data Privacy

SOC 2 certified, GDPR and HIPAA compliant in progress. Zero data retention, never used for training.

Inference

High-quality Inference

Kimi K2.6 Clusters have passed official KVV verification by Moonshot. It delivers stable low latency and high-performance inference even under high concurrency.

Reliable & Stable

99.9% uptime, low latency — backed by advanced AI infra, in-house monitoring, 24/7 support.

Security and Data Privacy

SOC 2 certified, GDPR and HIPAA compliant in progress. Zero data retention, never used for training.

AI Cloud

Available NVIDIA GPUs

Instantly allocated GPU cluster with ready-to-go AI stack

Storage

Our enterprise-class storage solutions are built on self-controlled hardware infrastructure and achieve technological differentiation through a four-layer architecture

Networking

Automated network configuration, private connectivity, and high-performance interconnects

Data Center Infrastructure

Design, Deploy, Manage GPU Clusters

Design and operate bulletproof AI infrastructure with a 99.9% uptime guarantee

Enterprise-grade infrastructure services: hardware sourcing, cluster bring-up, and deployment

Full cluster health monitoring prevents downtime proactively

Best Inference Platform for Open Models

Open Community

Designed to support advanced, secure, and fast open models.

High Quality

Powered by cutting-edge GPUs and optimized inference pipelines for fast, reliable production workloads.

Enterprise-Grade Trust

Models are hosted in our private cloud with full data isolation, SOC 2 compliant, zero data retention and no training usage.

Full Operational and Security Control

In-house clusters with real-time monitoring, diagnostics, and alerting to ensure GPU health, high SLA and utilization.

Technical Support

7*24*365 technical support and operational response to ensure stable cluster operation.

Full AI Stack

From AI infrastructure to build, tune, and scale AI models, we help enterprises move faster, operate smarter, and scale more efficiently.

Power Enterprise Success with Canopy Wave

Game AI Pricing: Token to Subscription

Canopy Wave designed a subscription pass system for Pax Historia, converting volatile AI token costs into predictable fixed pricing, allowing the gaming platform to scale usage without margin erosion.

AI & GPU Cloud for UCSD Research

Canopy Wave provisioned UCSD's research team with on-demand H100 GPU clusters, powering large-scale NLP analysis of federal procurement data and compressing research cycles from weeks to days.

Accelerating BioAI with GPUaaS

Canopy Wave equipped Foundry BioSciences with H100 clusters for protein engineering workloads, cutting compute costs while maintaining 24/7 uptime to scale complex simulations.

Game AI Pricing: Token to Subscription

Canopy Wave designed a subscription pass system for Pax Historia, converting volatile AI token costs into predictable fixed pricing, allowing the gaming platform to scale usage without margin erosion.

AI & GPU Cloud for UCSD Research

Canopy Wave provisioned UCSD's research team with on-demand H100 GPU clusters, powering large-scale NLP analysis of federal procurement data and compressing research cycles from weeks to days.

Accelerating BioAI with GPUaaS

Canopy Wave equipped Foundry BioSciences with H100 clusters for protein engineering workloads, cutting compute costs while maintaining 24/7 uptime to scale complex simulations.